Cloudflare BYOIP Outage Drops 1100 Prefixes

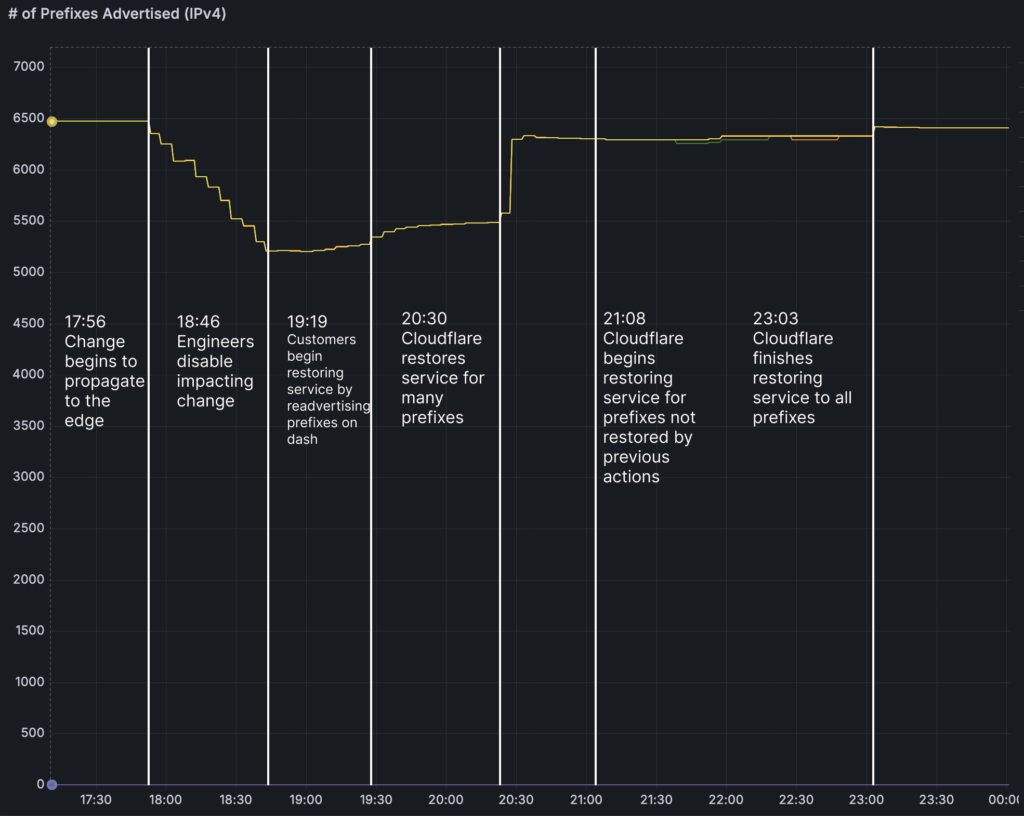

Enterprises using Cloudflare’s Bring Your Own IP (BYOIP) service lost reachability during an outage that began on February 20 at 17:48 UTC. A configuration change in IP address management triggered unintended BGP withdrawals, leaving applications unreachable and causing connection timeouts worldwide. The incident affected roughly 25% of the 4,306 BYOIP prefixes (out of 6,500 total), lasted 6 hours 7 minutes, and did not involve a cyberattack.

Trigger of Cloudflare BYOIP Outage

Engineers were automating BYOIP prefix removal with a sub-task that queried the Addressing API using the “pending_delete” parameter. A bug caused the query to return all prefixes instead of only those pending deletion, so the task queued mass deletions and BGP withdrawals. The changes propagated to edge servers and disrupted traffic attraction for CDN, Spectrum, Dedicated Egress, and Magic Transit.

The change had only partially rolled out when it was reverted at 18:46 UTC, which limited the scope and averted a total disruption.
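One common way a filter flag can end up returning every record is lenient parsing, where an empty or malformed value silently disables the filter. The sketch below illustrates that failure pattern and a stricter alternative. It is not Cloudflare's code: the prefix table, both function names, and the accepted values are hypothetical, and the article does not describe the actual mechanism of the bug.

```python
# Hypothetical in-memory prefix table; the fields are illustrative only.
PREFIXES = [
    {"cidr": "192.0.2.0/24", "pending_delete": False},
    {"cidr": "198.51.100.0/24", "pending_delete": True},
    {"cidr": "203.0.113.0/24", "pending_delete": False},
]


def list_prefixes_lenient(params: dict[str, str]) -> list[str]:
    """Risky pattern: an empty flag value silently disables the filter."""
    flag = params.get("pending_delete", "")
    if flag:  # "" is falsy, so a flag sent without a value skips the filter
        return [p["cidr"] for p in PREFIXES if p["pending_delete"]]
    return [p["cidr"] for p in PREFIXES]


def list_prefixes_strict(params: dict[str, str]) -> list[str]:
    """Safer pattern: a flag that is present must carry a valid value."""
    if "pending_delete" not in params:
        return [p["cidr"] for p in PREFIXES]
    value = params["pending_delete"].lower()
    if value not in ("true", "false"):
        raise ValueError(f"invalid pending_delete value: {value!r}")
    want = value == "true"
    return [p["cidr"] for p in PREFIXES if p["pending_delete"] == want]
```

With the lenient version, a caller that sends the flag with no value receives all three prefixes and could queue them all for deletion. The strict version rejects the same request with an error, so the mistake surfaces before any destructive step runs.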

Affected Services and Path Hunting

Traffic to BYOIP prefixes could no longer reach Cloudflare’s edge, which induced BGP path hunting: routers repeatedly searched for alternative routes until connections timed out. The 1.1.1.1 website returned 403 “Edge IP Restricted” errors, although DNS resolution continued to work. Core CDN saw connection failures, Spectrum proxying halted, Dedicated Egress traffic was blocked, and Magic Transit connections timed out.

Some customers restored their prefixes by toggling them in the dashboard; others needed their bindings recreated manually because software bugs had removed their configuration.

Addressing API Role

The Addressing API is the source of truth for IP addresses, and changes made through it sync to the global network immediately. Customer signals update its database, which in turn triggers BGP and binding changes. The automation, pursued under the Code Orange: Fail Small initiative, was meant to eliminate manual deletions, but staging data did not adequately simulate production scale, and tests did not cover the task running independently.

Recovery varied: prefixes that had only been withdrawn could be toggled back on through the dashboard, while deleted bindings had to be restored through global configuration changes.

Timeline of Cloudflare BYOIP Outage

The following table captures key milestones from code merge to resolution.

| Time (UTC) | Status | Description |
|---|---|---|
| 2026-02-05 21:53 | Code merged | Broken sub-process integrated |
| 2026-02-20 17:46 | Deployed | API release activates task |
| 2026-02-20 17:56 | Impact begins | Withdrawals propagate |
| 2026-02-20 18:46 | Mitigated | Task terminated |
| 2026-02-20 19:19 | Partial recovery | Dashboard self-mitigation |
| 2026-02-20 23:03 | Resolved | All prefixes restored |

The table traces the 6+ hour progression described in Cloudflare’s postmortem.

The Cloudflare BYOIP outage underscores the risks of configuration deployments in edge networks.

Remediation Under Code Orange

Post-incident plans call for standardizing API schemas so that flags are validated, separating operational state from configuration state with snapshots that enable mediated rollbacks, and adding circuit breakers that halt rapid withdrawals. Health metrics will gate deployments, in line with controlled rollouts and failure-mode testing.
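A circuit breaker for withdrawals can be sketched as a sliding-window counter that refuses further withdrawals once too many arrive in a short period. The example below is a minimal illustration of the idea, not Cloudflare's design: the class name, thresholds, and manual-reset behavior are all assumptions.

```python
from collections import deque


class WithdrawalBreaker:
    """Trips when too many withdrawals are requested within a sliding window."""

    def __init__(self, max_withdrawals: int, window_seconds: float) -> None:
        self.max_withdrawals = max_withdrawals
        self.window_seconds = window_seconds
        self._events: deque[float] = deque()  # timestamps of allowed withdrawals
        self.tripped = False

    def allow(self, now: float) -> bool:
        """Return True if a withdrawal at time `now` (seconds) may proceed."""
        if self.tripped:
            return False
        # Drop timestamps that have aged out of the window.
        while self._events and now - self._events[0] >= self.window_seconds:
            self._events.popleft()
        if len(self._events) >= self.max_withdrawals:
            self.tripped = True  # stays open until an operator resets it
            return False
        self._events.append(now)
        return True

    def reset(self) -> None:
        """Manually close the breaker after the cause has been reviewed."""
        self._events.clear()
        self.tripped = False


breaker = WithdrawalBreaker(max_withdrawals=3, window_seconds=60.0)
decisions = [breaker.allow(t) for t in (0.0, 1.0, 2.0, 3.0, 100.0)]
print(decisions)  # [True, True, True, False, False]
```

The breaker in this sketch stays tripped even after the window has passed, so a runaway task cannot resume on its own. Whether to auto-recover or require a manual reset is a design choice that the article does not specify.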

Staging enhancements and full test coverage are intended to prevent a recurrence.

The Cloudflare BYOIP outage disrupted service availability for a subset of customers, causing path-hunting latency and connection failures across protected applications. No attack was involved, but the incident shows how fragile infrastructure can be in the face of internal changes. The automation and circuit breakers planned in the vendor’s postmortem are intended to improve future resilience.
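The snapshot-based rollback mentioned in the remediation plan can be illustrated with a small store that saves a copy of the configuration before a risky change and restores it afterwards. This is a sketch only: `ConfigStore`, its methods, and the shape of the configuration dictionary are hypothetical and do not reflect Cloudflare's systems.

```python
import copy
from typing import Any, Callable


class ConfigStore:
    """Holds desired configuration and keeps snapshots for rollback."""

    def __init__(self, initial: dict[str, Any]) -> None:
        self._config = copy.deepcopy(initial)
        self._snapshots: list[dict[str, Any]] = []

    def snapshot(self) -> int:
        """Save a deep copy of the current config and return its id."""
        self._snapshots.append(copy.deepcopy(self._config))
        return len(self._snapshots) - 1

    def apply(self, change: Callable[[dict[str, Any]], None]) -> None:
        """Mutate the config in place via a caller-supplied change function."""
        change(self._config)

    def rollback(self, snapshot_id: int) -> None:
        """Restore the config to a previously saved snapshot."""
        self._config = copy.deepcopy(self._snapshots[snapshot_id])

    @property
    def config(self) -> dict[str, Any]:
        """Return a copy so callers cannot bypass apply()."""
        return copy.deepcopy(self._config)


store = ConfigStore({"192.0.2.0/24": {"advertised": True}})
snap = store.snapshot()                # taken before the risky change
store.apply(lambda cfg: cfg.clear())   # simulates an accidental mass deletion
store.rollback(snap)                   # restores the pre-change bindings
```

Because each snapshot is a deep copy, later mutations cannot corrupt it, and restoring becomes a single operation rather than a per-prefix manual rebuild.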
