Anthropic MCP Hit by Flaw Enabling Remote Code Execution

A critical architectural vulnerability discovered in Anthropic’s Model Context Protocol (MCP) could expose hundreds of thousands of AI systems to remote code execution, according to new research from OX Security.

This flaw is built into the official MCP SDK, affecting every supported programming language, including Python, TypeScript, Java, and Rust.

OX Security researchers estimate that the vulnerability affects software with more than 150 million downloads, more than 7,000 publicly accessible servers, and up to 200,000 vulnerable instances globally.

Anthropic MCP Vulnerability

OX Security’s research identifies four distinct attack families that threat actors can leverage:

- Unauthenticated UI Injection in widely used AI frameworks

- Hardening Bypasses in environments marketed as “protected,” including Flowise

- Zero-Click Prompt Injection targeting popular AI IDEs like Windsurf and Cursor

- Malicious Marketplace Distribution, with 9 out of 11 MCP registries successfully poisoned during testing

Researchers confirmed successful command execution on six live production platforms, including LiteLLM, LangChain, and IBM’s LangFlow tools, which are widely used across enterprise AI deployments.

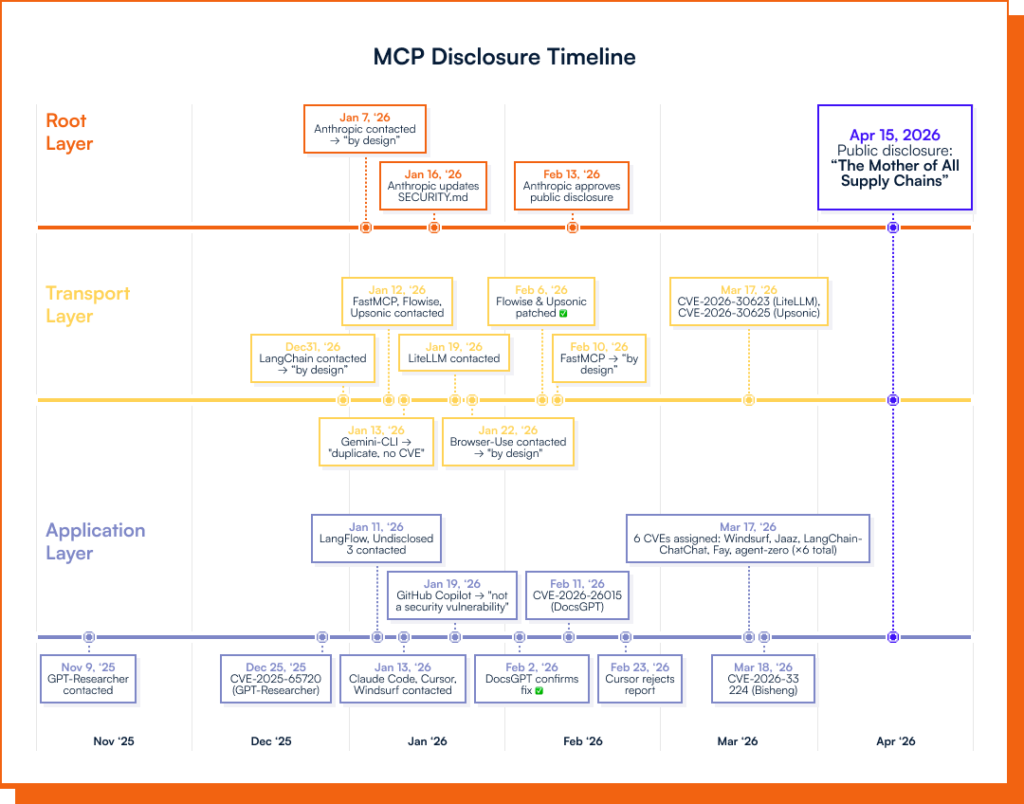

The research has identified at least 10 CVEs with critical and high severity ratings. Affected products include GPT Researcher (CVE-2025-65720), LiteLLM (CVE-2026-30623), Agent Zero (CVE-2026-30624), Windsurf (CVE-2026-30615), DocsGPT (CVE-2026-26015), and several others.

Attack vectors range from unauthenticated UI injection and authenticated RCE via JSON configuration to zero-click prompt injection leading to local code execution.

LiteLLM, Bisheng, and DocsGPT have released patches; the remaining disclosures are still in reported status, awaiting fixes.

OX Security says it repeatedly urged Anthropic to issue root-level patches that would have protected millions of downstream users simultaneously.

Anthropic declined, characterizing the behavior as “expected” and by design. OX Security subsequently notified Anthropic of its intent to publish, and Anthropic did not object.

Through more than 30 responsible disclosures, OX Security has worked to patch individual downstream projects, but the underlying protocol vulnerability remains unaddressed. Anthropic recently unveiled Claude Mythos, a tool designed to help secure the world’s software.

OX Security’s research serves as a direct call for Anthropic to apply that same security-first commitment to its own protocol infrastructure, specifically citing CISA’s “Secure by Design” principles.

Mitigations

Security teams and developers building on MCP-based frameworks should act on the following guidance immediately:

- Block public internet exposure for AI services connected to sensitive APIs and databases

- Treat all external MCP configuration input as untrusted; never allow raw user input to flow into `StdioServerParameters` or equivalent functions

- Install MCP servers exclusively from verified sources such as the official GitHub MCP Registry.

- Run MCP-enabled services inside sandboxed environments with restricted shell and disk permissions.

- Monitor all tool invocations for unusual background activity or data exfiltration attempts.

- Update all affected services to their latest patched versions, and turn off unpatched services that accept user input.
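The guidance on untrusted configuration can be illustrated with a minimal sketch. It assumes a service that lets users choose which MCP server to launch, and it is not taken from OX Security's report or from any MCP SDK. The names `StdioLaunchSpec`, `APPROVED_SERVERS`, `UntrustedConfigError`, and `resolve_launch_spec`, along with the example server path, are hypothetical. The idea is that user input only selects an entry from a server-side allowlist and never supplies the command, arguments, or environment itself.

```python
import json
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class StdioLaunchSpec:
    """Hypothetical stand-in for the command/args pair that a stdio
    transport (e.g. an SDK's StdioServerParameters) would receive."""
    command: str
    args: Tuple[str, ...]


# Server-side allowlist maintained by operators, never by end users.
# The entry below is an illustrative assumption.
APPROVED_SERVERS: Dict[str, StdioLaunchSpec] = {
    "docs-search": StdioLaunchSpec("/opt/mcp/bin/docs-search", ("--read-only",)),
}


class UntrustedConfigError(ValueError):
    """Raised when user-supplied configuration is rejected."""


def resolve_launch_spec(raw_json: str) -> StdioLaunchSpec:
    """Map untrusted JSON to a pre-approved launch spec, or raise."""
    try:
        request = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        raise UntrustedConfigError("config is not valid JSON") from exc

    if not isinstance(request, dict):
        raise UntrustedConfigError("config must be a JSON object")

    # Users may not provide anything that influences process execution.
    forbidden = request.keys() & {"command", "args", "env"}
    if forbidden:
        raise UntrustedConfigError(
            f"fields not accepted from users: {sorted(forbidden)}"
        )

    name = request.get("server")
    if not isinstance(name, str) or name not in APPROVED_SERVERS:
        raise UntrustedConfigError("unknown or missing server name")

    # Only the operator-defined spec ever reaches the process launcher.
    return APPROVED_SERVERS[name]
```

In this sketch, a request such as `{"server": "docs-search"}` resolves to the operator-defined spec, while a request carrying its own `command` or `args` is rejected before anything is spawned. Real deployments would likely pair this with the sandboxing and monitoring steps listed above.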

OX Security has also shipped platform-level protections for its customers, including detection of improper STDIO-based MCP configurations in AI-generated code and flagging of existing vulnerable patterns in customer codebases.
